123B has emerged as a significant advancement in language modeling. This large model, with its vast number of parameters, shows a strong ability to interpret and generate human-quality text. Researchers are exploring 123B's potential in a range of applications, including text summarization. Its transparent nature further promotes collaboration and innovation within the AI community.

As a result, 123B could change the way we interact with machines, paving the way for more seamless and capable AI systems.

Exploring the Capabilities of 123B: Text Generation and Beyond

The language model 123B has been attracting attention in the AI community. Known primarily for its text generation, 123B can produce human-like text on a wide range of topics. However, its reach extends beyond plain text generation.

- 123B's complex architecture allows it to analyze contextual information within text, enabling it to participate in meaningful dialogues.

- Its extensive training dataset has given it a broad knowledge base, enabling it to answer detailed inquiries on diverse subjects.

- Furthermore, 123B shows potential in domains such as summarization, translation, and even storytelling.

As research and development continue, the range of possible uses for 123B is likely to grow. This language model has the potential to change the way we interact with technology and information.

Assessing Performance in Natural Language Understanding

The field of natural language understanding (NLU) is constantly evolving, with new techniques emerging regularly. To measure the progress of these methods reliably, comprehensive assessment tools are crucial. The 123B benchmark specifically aims to test large language models (LLMs) on a broad range of NLU problems. These include tasks such as text classification, question answering, and summarization.

By offering a standardized assessment procedure, the 123B benchmark makes results comparable across the NLU community. Researchers and developers can compare the performance of different LLMs, identify areas for improvement, and ultimately advance the field of NLU.
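As a rough illustration of what a standardized assessment can look like, the sketch below scores a model separately on each task using exact-match accuracy. It is a minimal sketch under stated assumptions, not the benchmark's actual procedure: the function names, the example data, and the `toy_model` stand-in are all hypothetical, and exact match only suits tasks with a single short answer (summarization would need a different metric).

```python
from collections import defaultdict
from typing import Callable, Dict, Iterable, Tuple

# Hypothetical data layout: (task_name, input_text, expected_answer).
Example = Tuple[str, str, str]


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial differences are ignored."""
    return " ".join(text.lower().split())


def score_by_task(
    model: Callable[[str], str], examples: Iterable[Example]
) -> Dict[str, float]:
    """Return exact-match accuracy per task for any callable model."""
    correct: Dict[str, int] = defaultdict(int)
    total: Dict[str, int] = defaultdict(int)
    for task, prompt, expected in examples:
        total[task] += 1
        if normalize(model(prompt)) == normalize(expected):
            correct[task] += 1
    return {task: correct[task] / total[task] for task in total}


def toy_model(prompt: str) -> str:
    """Stand-in for a real LLM call (assumption: a model maps text to text)."""
    canned = {
        "Label the sentiment: great film": "Positive",
        "Label the sentiment: dull plot": "positive",
        "Capital of France?": " paris ",
    }
    return canned.get(prompt, "")


# Tiny made-up evaluation set, for illustration only.
EXAMPLES = [
    ("text_classification", "Label the sentiment: great film", "positive"),
    ("text_classification", "Label the sentiment: dull plot", "negative"),
    ("question_answering", "Capital of France?", "Paris"),
]

if __name__ == "__main__":
    for task, accuracy in score_by_task(toy_model, EXAMPLES).items():
        print(f"{task}: {accuracy:.2f}")
```

Because every model is passed in as a plain callable and scored on the same examples with the same metric, two models can be compared task by task, which is the kind of comparability a shared benchmark is meant to provide.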

Fine-Tuning 123B for Specialized Tasks: Applications and Results

Fine-tuning large language models such as 123B has proven to be a powerful technique for obtaining state-of-the-art performance on a broad range of specialized tasks. This paper investigates fine-tuning 123B for a number of applications and reports promising results.

We perform a comprehensive study focusing on areas such as natural language generation, measuring the influence of different fine-tuning approaches. Our experiments show that fine-tuning 123B can markedly improve accuracy on these specialized tasks, often exceeding existing systems.

Furthermore, we investigate the effect of training optimization choices on fine-tuned performance, providing useful guidance for practitioners.

Finally, we explore the challenges of fine-tuning 123B and outline future directions for further improvement.

Delving into the Architecture and Training of 123B

This paper provides a comprehensive analysis of the architecture behind the 123B language model, shedding light on its training process. We examine the components that make up this model, discussing key decisions that contributed to its performance. Furthermore, we outline the training data used to shape 123B's understanding of language.

- Through a detailed examination, we aim to provide valuable insights into the inner workings of 123B.

- This knowledge is essential for researchers and developers seeking to build upon this foundation.

Ultimately, this analysis sheds light on the intricacies of 123B's architecture and training process, offering a roadmap for future development in the field of large language models.

123B: Ensuring Ethical and Accountable AI Deployment

The proliferation of powerful language models like 123B raises significant ethical considerations that demand careful analysis. As we harness the capabilities of these models, it is crucial to ensure responsible AI deployment. This requires a multi-faceted approach that considers issues such as bias, fairness, transparency, accountability, and the potential for manipulation. Establishing robust ethical guidelines and oversight mechanisms is paramount to mitigate risks and promote trust in AI systems.

- Furthermore, ongoing evaluation and collaboration with stakeholders are crucial to address emerging ethical challenges and ensure that AI technology benefits society in a sustainable manner.

- Ultimately, the deployment of 123B and similar models should be guided by a strong commitment to ethical principles, promoting human well-being, and upholding societal values.

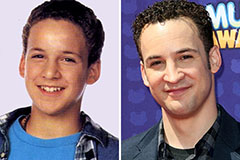

Ben Savage Then & Now!

Ben Savage Then & Now! Michael C. Maronna Then & Now!

Michael C. Maronna Then & Now! Tiffany Trump Then & Now!

Tiffany Trump Then & Now! Tonya Harding Then & Now!

Tonya Harding Then & Now! Naomi Grossman Then & Now!

Naomi Grossman Then & Now!